Background:

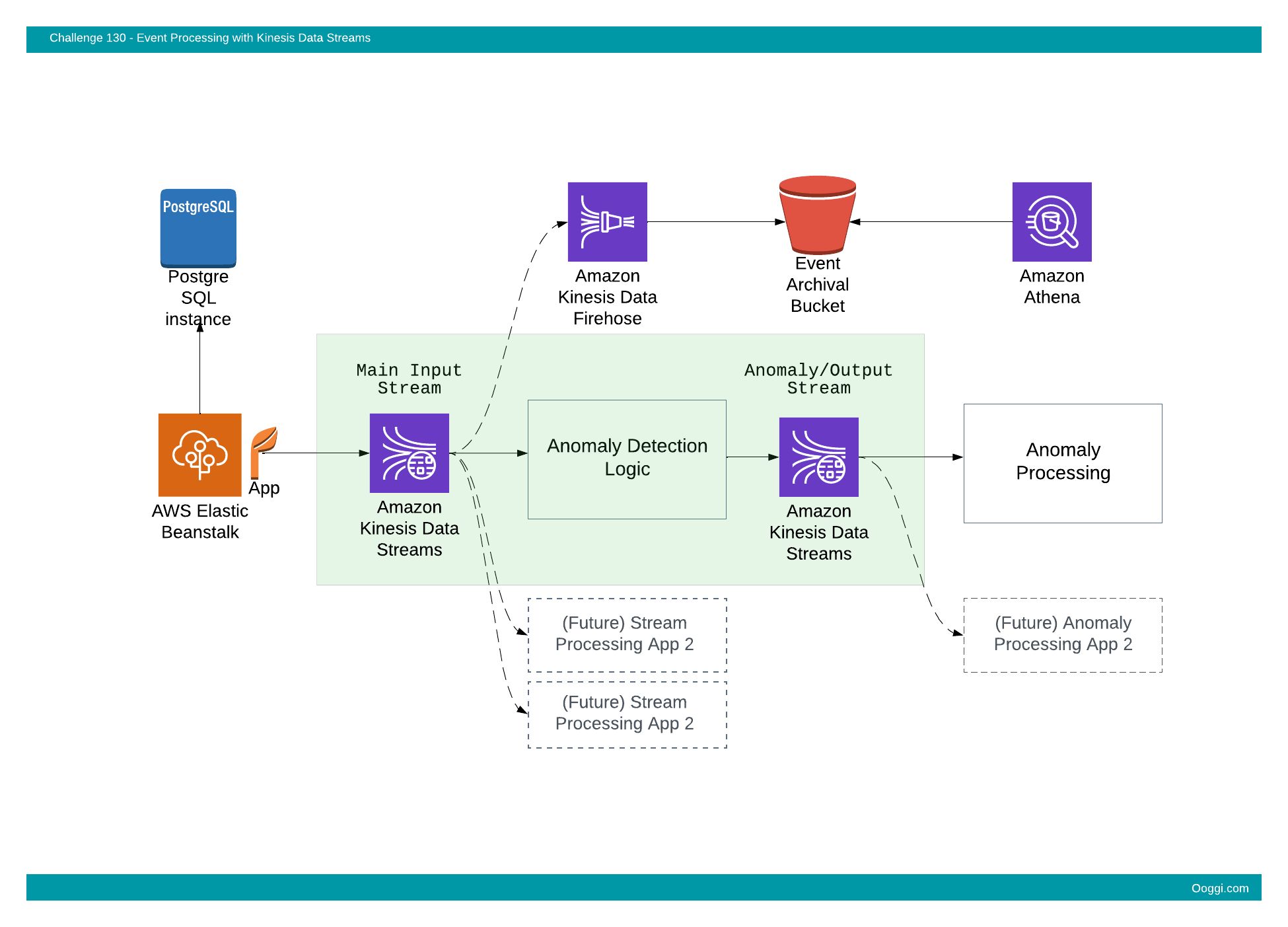

A team supporting an online marketplace platform is looking to upgrade their event ingestion and processing capabilities. Currently, various events are written to their main relational database (PostgreSQL).

Lately, a number of challenges have come up around this design.

- With the ever-increasing number of events, it’s getting more difficult to scale the database. It has been vertically scaled to a bigger instance a number of times, but that is only a temporary solution. At some point even the biggest machine won’t do.

- Many teams need to respond to events in near real time. Having many additional applications continuously polling the database for the most recent changes is not practical in this case.

- As this is the main production database, it’s handled with great care and different requests for integration go through a lengthy approval process by the database team. This frustrates other teams and slows down progress.

One important use case is that of fraud/anomaly detection. The business is in urgent need of reviewing all transactions in near real time and reporting on any that meet specific criteria. This business logic will first be implemented as a set of manually coded rules, but will later be replaced by a machine learning model.

For the above reasons, the team has decided to move forward with the implementation of an event ingestion system that will allow various stream processing applications to consume the events in parallel with sub-second latency.

The service picked for the task is Amazon Kinesis Data Streams, and the first application prototype will be the fraud/anomaly detection system.

Bounty:

- Points: 30

- Path: Cloud Engineer

Difficulty:

- Level: 3

- Estimated time: 2-12 hours

Deliverables:

- A system that will provide a Kinesis Data Stream for ingestion and write anomalous events to an output Kinesis Data Stream after applying specific anomaly detection logic

Prototype description:

An Amazon Kinesis Data Stream will be used for ingestion of events.

Events written into the main ingestion stream will be read by the anomaly detection service, which will apply the required logic. Events detected as anomalous will be written to an output Kinesis Data Stream.

An IAM Role with the relevant IAM policy is needed to generate temporary credentials that will allow the testing system to access both the main ingestion Kinesis stream and the output / anomaly Kinesis Data Stream.
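
As a rough illustration of what such a policy could look like, the sketch below builds a policy document from the API actions listed under Requirements. The account ID is a hypothetical placeholder, and the wildcard ARNs are an assumption based on the stream names only being required to start with the given prefixes. To my understanding `kinesis:ListStreams` does not support resource-level permissions, so it is given its own statement with `"Resource": "*"`; treat that as an assumption to verify against the IAM documentation.

```python
import json

REGION = "eu-west-1"           # Ireland, as required by the brief
ACCOUNT_ID = "123456789012"    # hypothetical placeholder account ID


def stream_arn(prefix):
    """Return a wildcard ARN matching any stream whose name starts with prefix."""
    return f"arn:aws:kinesis:{REGION}:{ACCOUNT_ID}:stream/{prefix}*"


def build_test_policy():
    """Build an IAM policy document for the role assumed by the testing system."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "WriteMainInput",
                "Effect": "Allow",
                "Action": ["kinesis:PutRecord", "kinesis:PutRecords"],
                "Resource": stream_arn("main-input-stream"),
            },
            {
                "Sid": "ReadAnomalyStream",
                "Effect": "Allow",
                "Action": [
                    "kinesis:GetRecords",
                    "kinesis:GetShardIterator",
                    "kinesis:DescribeStream",
                    "kinesis:ListShards",
                ],
                "Resource": stream_arn("anomaly-stream"),
            },
            {
                # Assumption: ListStreams is account-wide and needs "*".
                "Sid": "ListStreams",
                "Effect": "Allow",
                "Action": "kinesis:ListStreams",
                "Resource": "*",
            },
        ],
    }


print(json.dumps(build_test_policy(), indent=2))
```

The role's trust policy, which decides who may assume the role and obtain the temporary credentials, is not covered here.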

The test will write different events into the main ingestion stream and will expect to find only anomalous events in the output / anomaly stream.
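
The actual test harness is not described in this brief, but a reader for the output stream might look roughly like the sketch below. It uses only the read actions granted on the anomaly stream. The function name, the `TRIM_HORIZON` starting position, and the polling parameters are assumptions made for illustration; `client` is expected to be a boto3 Kinesis client (or any object with the same three methods), and pagination of `list_shards` is ignored for brevity.

```python
import json
import time


def read_anomaly_events(client, stream_name, timeout_s=2.0, poll_interval_s=0.25):
    """Collect JSON events from every shard of stream_name until timeout_s elapses."""
    shards = client.list_shards(StreamName=stream_name)["Shards"]

    # One iterator per shard, starting from the oldest available record.
    iterators = [
        client.get_shard_iterator(
            StreamName=stream_name,
            ShardId=shard["ShardId"],
            ShardIteratorType="TRIM_HORIZON",
        )["ShardIterator"]
        for shard in shards
    ]

    events = []
    deadline = time.monotonic() + timeout_s
    while iterators and time.monotonic() < deadline:
        next_iterators = []
        for iterator in iterators:
            response = client.get_records(ShardIterator=iterator, Limit=1000)
            # Record data arrives as bytes; json.loads accepts bytes directly.
            events.extend(json.loads(r["Data"]) for r in response["Records"])
            # A closed shard returns no further iterator.
            if response.get("NextShardIterator"):
                next_iterators.append(response["NextShardIterator"])
        iterators = next_iterators
        time.sleep(poll_interval_s)
    return events
```

A check could then assert that every returned event has a `transaction_amount` above 100 and that no expected `event_id` is missing.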

Requirements:

- The prototype shall ingest events via a Kinesis stream and write the events that are detected as anomalous to an output Kinesis stream

- The main ingestion Kinesis stream name shall start with: `main-input-stream`

- The output / anomaly Kinesis stream name shall start with: `anomaly-stream`

- All resources shall be deployed in the Ireland (eu-west-1) region

- Temporary IAM credentials shall be provided for the test that will allow the following API actions on the relevant Kinesis streams:

  - Main input stream: `kinesis:PutRecord`, `kinesis:PutRecords`

  - Anomaly/output stream: `kinesis:GetRecords`, `kinesis:GetShardIterator`, `kinesis:DescribeStream`, `kinesis:ListShards`, `kinesis:ListStreams`

- The client application will write events (JSON) with the following structure into the main ingestion Kinesis stream:

  `{"event_id" : "[Event ID]" , "transaction_amount" : [Transaction amount]}`

  Example event:

  `{"event_id" : "12345678" , "transaction_amount" : 120}`

- Events with a transaction_amount value higher than 100 shall be written into the output/anomaly stream

- No action is required at this time on events with a transaction_amount equal to or lower than 100

- The original event (with the same structure and JSON data types) is expected in the output/anomaly stream (only relevant events)

- The maximum time from event ingestion into the main ingestion stream to the relevant event being available in the output/anomaly stream shall be 2 seconds
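
The brief does not prescribe how the processing component is built. One possible shape, assuming an AWS Lambda function triggered by the main input stream, is sketched below. The names `forward_anomalies`, `is_anomalous`, and `OUTPUT_STREAM` are hypothetical, and the output stream name is assumed to be exactly `anomaly-stream` although only the prefix is required. The original payload bytes are forwarded untouched so that the structure and JSON data types are preserved. Partial failures reported by `put_records` are not retried in this sketch.

```python
import base64
import json

THRESHOLD = 100                   # amounts strictly above this are anomalous
OUTPUT_STREAM = "anomaly-stream"  # assumed name; only the prefix is required
MAX_BATCH = 500                   # PutRecords accepts at most 500 records per call


def is_anomalous(payload):
    """Return True if payload is a JSON event with transaction_amount > THRESHOLD."""
    try:
        amount = json.loads(payload)["transaction_amount"]
    except (ValueError, KeyError, TypeError):
        return False  # malformed or unexpected events are ignored
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    return is_number and amount > THRESHOLD


def forward_anomalies(event, client, stream_name=OUTPUT_STREAM):
    """Write anomalous records from a Lambda Kinesis event to stream_name."""
    entries = []
    for record in event.get("Records", []):
        # Lambda delivers Kinesis record data base64-encoded.
        payload = base64.b64decode(record["kinesis"]["data"])
        if is_anomalous(payload):
            entries.append(
                {"Data": payload, "PartitionKey": record["kinesis"]["partitionKey"]}
            )
    for start in range(0, len(entries), MAX_BATCH):
        # Not handled here: FailedRecordCount > 0 would need a retry.
        client.put_records(
            StreamName=stream_name, Records=entries[start:start + MAX_BATCH]
        )
    return len(entries)


def lambda_handler(event, context):
    import boto3  # imported lazily so the helpers above run without the AWS SDK

    client = boto3.client("kinesis", region_name="eu-west-1")
    return {"forwarded": forward_anomalies(event, client)}
```

Whether this approach meets the 2-second limit depends on how the trigger is configured (batch size, batching window, number of shards), so that should be measured rather than assumed.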

Your mission, if you choose to accept it, is to deploy a Kinesis-based ingestion service with a custom event processing component and an output Kinesis stream.